Explanation of Each Option

Option A: Configure two routes for 10.0.0.0/8 with different priorities, each pointing to separate network virtual appliances.

- Incorrect.

- This creates an active/passive failover setup using route priorities. The lower-priority route is only used if the higher-priority next hop becomes unavailable.

- It does not provide simultaneous load balancing across both NVAs, violating the active/active requirement.

- It also lacks automatic health-based removal of a failed NVA and proper flow symmetry for stateful appliances.
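The failover behavior described above can be illustrated with a toy route-selection model. This is a minimal sketch, not Google Cloud's implementation: the `Route` class, `select_next_hops`, the `nva-a`/`nva-b` names, and the `down` set are all hypothetical, and the model assumes that the route with the lowest priority number is preferred.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    name: str
    dest_range: str
    priority: int  # assumption: a lower number means a more preferred route
    next_hop: str


def select_next_hops(routes, down=frozenset()):
    """Return the next hops that would receive traffic in this toy model."""
    # Ignore routes whose next hop is considered unavailable.
    usable = [r for r in routes if r.next_hop not in down]
    if not usable:
        return []
    # Only the most preferred priority level is used; others stay idle.
    best = min(r.priority for r in usable)
    return sorted(r.next_hop for r in usable if r.priority == best)


# Hypothetical routes mirroring Option A: same destination, different priorities.
routes = [
    Route("to-onprem-a", "10.0.0.0/8", 100, "nva-a"),
    Route("to-onprem-b", "10.0.0.0/8", 200, "nva-b"),
]
```

In this model, `select_next_hops(routes)` returns only `nva-a` while both appliances are up, and `nva-b` appears only once `nva-a` is in the `down` set, which is the active/passive pattern the question rules out.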

Option B: Configure an internal HTTP(S) load balancer with the two network virtual appliances as backends. Configure a route for 10.0.0.0/8 with the internal HTTP(S) load balancer as the next hop.

- Incorrect.

- Internal HTTP(S) Load Balancers (now called internal Application Load Balancers) are Layer 7 proxy-based and limited to HTTP/HTTPS traffic. They cannot handle general IP traffic ("all traffic").

- Google Cloud does not support using an L7 proxy load balancer as a next hop for arbitrary traffic routing.

- This option fails both the traffic type and next-hop compatibility requirements.

Option C: Configure a network load balancer for the two network virtual appliances. Configure a route for 10.0.0.0/8 with the network load balancer as the next hop.

- Correct. In modern GCP terminology, this is the internal passthrough Network Load Balancer used as a next hop (see https://docs.cloud.google.com/load-balancing/docs/internal/setting-up-internal-next-hop-tags).

- In current Google Cloud documentation, the recommended solution for this exact use case is an internal passthrough Network Load Balancer.

- "Network Load Balancer" in this context refers to the internal passthrough variant (also historically called internal TCP/UDP load balancer in the console).

- Key points supporting "all traffic":

- When used as a next hop in a static route, an internal passthrough Network Load Balancer forwards all traffic and all IP protocols (TCP, UDP, and other protocols such as ICMP) to the backend NVAs, regardless of the protocol specified on the forwarding rule.

- Official docs state: "All protocols are supported with internal passthrough Network Load Balancer as next hop."

- Traffic is distributed across both healthy NVAs simultaneously using consistent hashing (active/active load balancing).

- Health checks on the backend service automatically remove a failed NVA, ensuring seamless failover without route changes.

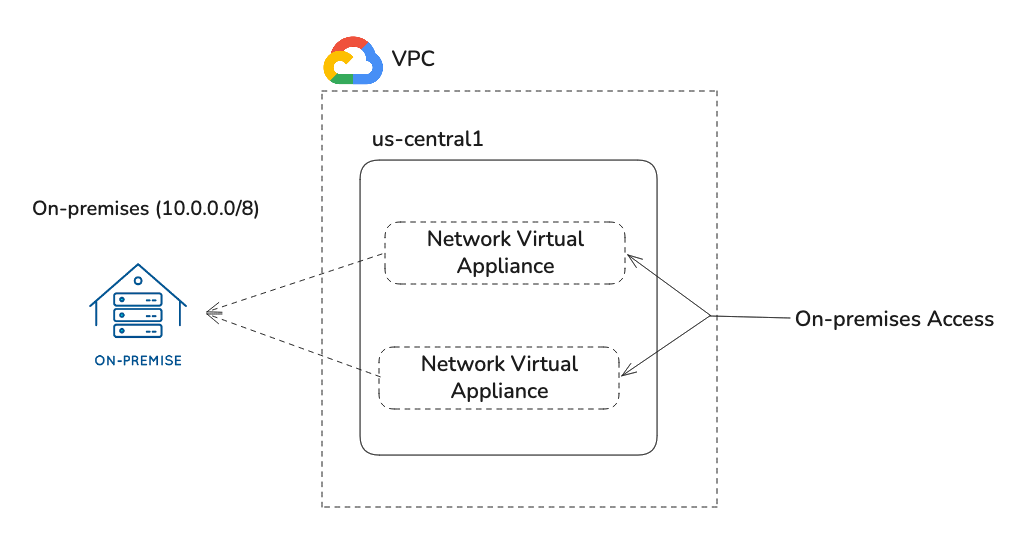

- This matches the official architecture for inserting highly available, scaled-out NVAs for hybrid connectivity and on-premises access.
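The combination of hash-based distribution and health-based removal described above can be sketched as follows. This is an illustration under stated assumptions, not Google's algorithm: it uses rendezvous (highest-random-weight) hashing as a stand-in for consistent hashing, and `pick_backend`, `_score`, the backend names, and the sample flows are all hypothetical.

```python
import hashlib


def _score(flow, backend):
    """Deterministic pseudo-random weight for a (flow, backend) pair."""
    key = "|".join(str(part) for part in flow) + "#" + backend
    return int.from_bytes(hashlib.sha256(key.encode()).digest()[:8], "big")


def pick_backend(flow, healthy_backends):
    """Pick the healthy backend with the highest weight for this flow."""
    if not healthy_backends:
        raise RuntimeError("no healthy backends")
    return max(sorted(healthy_backends), key=lambda b: _score(flow, b))


# Hypothetical flows: (src_ip, src_port, dst_ip, dst_port, protocol).
flows = [("192.168.1.10", 40000 + i, "10.1.2.3", 443, "tcp") for i in range(200)]
both = {"nva-a", "nva-b"}
three = both | {"nva-c"}
```

With both NVAs healthy, the flows spread across `nva-a` and `nva-b`. Passing only the healthy subset models a failed health check: flows that were on the removed backend move to a survivor, while flows already on a surviving backend keep their assignment.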

Option D: Configure an internal TCP/UDP load balancer with the two network virtual appliances as backends. Configure a route for 10.0.0.0/8 with the internal load balancer as the next hop.

- Technically correct but less precise with current terminology.

- This describes the same underlying resource as Option C (the internal passthrough Network Load Balancer).

- Older documentation and console labels often still use "internal TCP/UDP load balancer". However, current official guides consistently refer to it as the internal passthrough Network Load Balancer.

- Because the question uses the broader term "network load balancer" in Option C and the requirement explicitly calls for "All traffic" (not limited to TCP/UDP), Option C is the better match.

- Both C and D point to the same technical solution, but C aligns more closely with today's preferred naming and the question's emphasis on "all traffic".

Why Option C is Now Preferred

- Google Cloud documentation (updated as recently as March 2026) explicitly titles sections as "internal passthrough Network Load Balancer as next hop".

- It clearly supports all protocols when used as a next hop, directly addressing the "All traffic" requirement.

- External Network Load Balancers still cannot be used as next hops, but the internal passthrough version is fully supported for NVA scenarios.

- This setup is the standard pattern for active/active NVA insertion with redundancy in hybrid networks.
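As a rough illustration of the route in this pattern, the sketch below assembles a `gcloud compute routes create` argument list that uses the load balancer's forwarding rule as the next hop. It only builds the list and does not run anything. The helper `route_create_args` and every resource name (`to-onprem-via-nva`, `transit-vpc`, `fr-nva-ilb`, `us-central1`) are hypothetical, and the flags should be checked against the current gcloud reference before use.

```python
def route_create_args(route, network, dest_range, forwarding_rule, region):
    """Build (but do not execute) a gcloud command for a next-hop-ILB route."""
    return [
        "gcloud", "compute", "routes", "create", route,
        f"--network={network}",
        f"--destination-range={dest_range}",
        # The next hop is the forwarding rule of the internal passthrough
        # Network Load Balancer that fronts the NVAs.
        f"--next-hop-ilb={forwarding_rule}",
        f"--next-hop-ilb-region={region}",
    ]


# Hypothetical resource names, for illustration only.
args = route_create_args(
    "to-onprem-via-nva", "transit-vpc", "10.0.0.0/8", "fr-nva-ilb", "us-central1"
)
print(" ".join(args))
```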

Exam Takeaways (Updated for 2026)

- For routing all IP traffic through multiple NVAs with active/active load balancing and HA → use a static route with an internal passthrough Network Load Balancer as the next hop.

- NVAs should be placed in a managed instance group (MIG) behind the load balancer for autoscaling.

- The load balancer provides symmetric hashing for flow symmetry (important for stateful firewalls).

- Watch for terminology: questions may use "Network Load Balancer", "passthrough Network Load Balancer", or the older "internal TCP/UDP load balancer".

- Always confirm it is internal (not external) and passthrough (not proxy-based).
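The idea behind symmetric hashing in the takeaways above can be shown with a small sketch: if the two endpoints of a flow are put in a canonical order before hashing, the forward and return packets map to the same backend. This is one possible way to obtain symmetry, assumed here for illustration and not a description of how Google Cloud implements it. The names `symmetric_flow_key` and `pick_backend_symmetric` are hypothetical.

```python
import hashlib


def symmetric_flow_key(src_ip, src_port, dst_ip, dst_port, protocol):
    """Order the two endpoints canonically so direction does not matter."""
    first, second = sorted([(src_ip, src_port), (dst_ip, dst_port)])
    return (first, second, protocol)


def pick_backend_symmetric(src_ip, src_port, dst_ip, dst_port, protocol, backends):
    """Hash the direction-independent key onto one of the backends."""
    key = repr(symmetric_flow_key(src_ip, src_port, dst_ip, dst_port, protocol))
    digest = hashlib.sha256(key.encode()).digest()
    ordered = sorted(backends)
    return ordered[int.from_bytes(digest[:8], "big") % len(ordered)]


nvas = ["nva-a", "nva-b"]  # hypothetical backend names
forward = pick_backend_symmetric("192.168.1.10", 40000, "10.1.2.3", 443, "tcp", nvas)
reverse = pick_backend_symmetric("10.1.2.3", 443, "192.168.1.10", 40000, "tcp", nvas)
```

Here `forward` and `reverse` name the same NVA, so a stateful firewall would see both directions of the connection.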

Reference Answer

C

This aligns with current Google Cloud best practices and official documentation for using an internal passthrough Network Load Balancer as a next hop to achieve all-traffic routing, active/active load balancing, and automatic failover through NVAs.

Note: In some older exam question banks, Option D was listed as correct due to legacy naming. With the evolution of GCP terminology toward "internal passthrough Network Load Balancer", C better reflects the modern and precise answer, especially given the "All traffic" requirement.