Explanation:

The error indicates that the prompt exceeds the new model's shorter context length. In a RAG pipeline the prompt contains the question plus the retrieved document chunks, so its size grows with both the chunk size and the number of chunks retrieved. Option C (decreasing chunk size) reduces the tokens contributed by each retrieved chunk. Option D (reducing retrieved records) reduces how many chunks are included. Both shrink the prompt without changing the model. Option A (smaller embedding model) changes only the vectors used for retrieval, not the text placed in the prompt, so the prompt token count is unchanged. Option B (reducing output tokens) limits the length of the response but does not address the input overflow. Option E (retraining with ALiBi) requires changing the model, which violates the constraint.
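The arithmetic behind options C and D can be illustrated with a minimal sketch. Every number here is a hypothetical assumption chosen for illustration (a 4096-token context limit, 1000-token chunks, 5 retrieved records, a 30-token question, and 50 tokens of prompt template); none comes from the question itself, and `estimate_prompt_tokens` is an illustrative helper, not part of any library.

```python
def estimate_prompt_tokens(chunk_tokens: int, top_k: int,
                           question_tokens: int = 30,
                           template_tokens: int = 50) -> int:
    """Rough prompt size: template + question + top_k retrieved chunks.

    All defaults are hypothetical values for illustration only.
    """
    return template_tokens + question_tokens + top_k * chunk_tokens


# Hypothetical context length of the self-hosted model.
CONTEXT_LIMIT = 4096

# Original settings: the prompt overflows the context window.
baseline = estimate_prompt_tokens(chunk_tokens=1000, top_k=5)

# Option C: smaller chunks, same number of retrieved records.
smaller_chunks = estimate_prompt_tokens(chunk_tokens=500, top_k=5)

# Option D: same chunk size, fewer retrieved records.
fewer_records = estimate_prompt_tokens(chunk_tokens=1000, top_k=3)

for name, total in [("baseline", baseline),
                    ("smaller chunks (C)", smaller_chunks),
                    ("fewer records (D)", fewer_records)]:
    print(f"{name}: {total} tokens, fits={total <= CONTEXT_LIMIT}")
```

Under these assumed numbers the baseline prompt is 5080 tokens and does not fit, while halving the chunk size (2580 tokens) or retrieving three records instead of five (3080 tokens) brings it under the limit. The embedding model does not appear anywhere in the calculation, which is why option A has no effect on prompt size.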

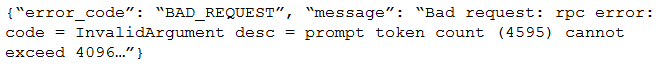

After switching the response generation LLM in a RAG pipeline from GPT-4 to a self-hosted model with a shorter context length, the following error occurs: //IMG//

Without changing the response generation model, which TWO solutions should be implemented? (Choose two.)

A. Use a smaller embedding model to generate embeddings

B. Reduce the maximum output tokens of the new model

C. Decrease the chunk size of embedded documents

D. Reduce the number of records retrieved from the vector database

E. Retrain the response generating model using ALiBi