Explanation:

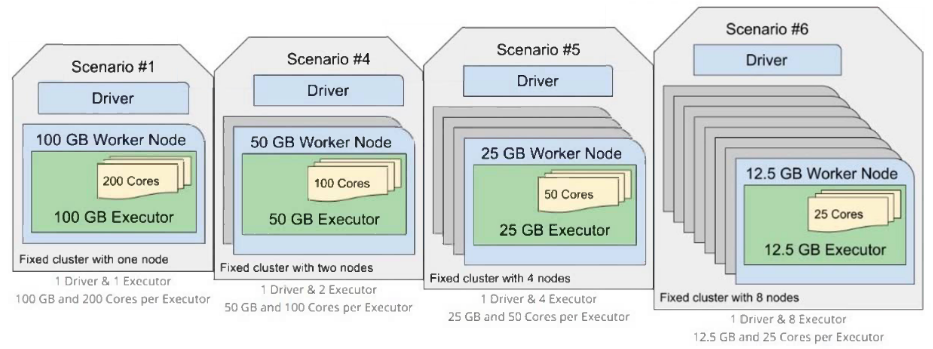

The question asks which cluster configuration cannot guarantee that a Spark application completes if a worker node fails. Scenario #1 uses a single executor that holds all 100GB of RAM and all 200 cores. If the worker node running that executor fails, no other executor is available to re-run the lost tasks, so the entire application fails. The other scenarios spread the same compute resources across multiple executors and worker nodes. If one worker node fails, the executors on the surviving nodes can take over its tasks and the application can still complete. Option E is incorrect because Spark's fault tolerance depends on re-executing failed tasks on other executors, which requires that other executors exist. The answer is therefore D (Scenario #1).
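The reasoning above reduces to one check: after losing any single worker node, is at least one executor left? The sketch below models this under simplifying assumptions. The `ClusterLayout` class and its methods are hypothetical illustrations, not a Spark API. It ignores the cluster manager relaunching executors elsewhere, and apart from Scenario #1 (one executor on one node, as described above), the layouts used are hypothetical examples rather than the actual scenarios from the image.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ClusterLayout:
    """Hypothetical model of a cluster: executors_per_node[i] is the number
    of executors running on worker node i."""

    name: str
    executors_per_node: tuple[int, ...]
    total_ram_gb: int = 100   # same total compute for every layout, per the question's note
    total_cores: int = 200

    def per_executor_resources(self) -> tuple[float, float]:
        """Split the total RAM and cores evenly across all executors."""
        n = sum(self.executors_per_node)
        return self.total_ram_gb / n, self.total_cores / n

    def survives_any_single_node_failure(self) -> bool:
        """Simplification: the application can finish only if, whichever
        worker node is lost, at least one executor remains to re-run tasks."""
        total = sum(self.executors_per_node)
        return all(total - lost > 0 for lost in self.executors_per_node)


# Scenario #1 as described: one executor on one worker node.
single = ClusterLayout("Scenario #1", (1,))
# Hypothetical layout: four worker nodes with one executor each.
spread = ClusterLayout("hypothetical: 4 nodes x 1 executor", (1, 1, 1, 1))
# Hypothetical layout: four executors packed onto one worker node.
packed = ClusterLayout("hypothetical: 1 node x 4 executors", (4,))

print(single.survives_any_single_node_failure())  # False
print(spread.survives_any_single_node_failure())  # True
print(packed.survives_any_single_node_failure())  # False
print(spread.per_executor_resources())            # (25.0, 50.0)
```

The `packed` layout shows why the spread across worker nodes matters, not just the executor count: several executors on the same node are all lost together when that node fails.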

Which of the following cluster configurations will fail to ensure the completion of a Spark application in the event of a worker node failure?

Note: Each configuration has roughly the same total compute power: 100GB of RAM and 200 cores.

A

Scenario #5

B

Scenario #4

C

Scenario #6

D

Scenario #1

E

They should all ensure completion because worker nodes are fault tolerant