Explanation:

The solution does not meet the goal. The script calls mlflow.log('AUC', auc) rather than the Azure ML SDK's run.log('AUC', auc), where run is obtained from Run.get_context(). HyperDrive can only optimize a metric that is logged to the run under the name configured as its primary metric. Note also that mlflow.log is not a function in the MLflow tracking API (MLflow records metrics with mlflow.log_metric), so the call as written would not record an AUC value. The community discussion, including a comment with 38 upvotes and references to Microsoft documentation, identifies run.log() as the method HyperDrive needs in order to access the AUC metric. Azure ML does support MLflow logging for general experiment tracking, but the proposed code does not give HyperDrive a metric to optimize in this context, so the answer is No.
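For comparison, here is a minimal sketch of the run.log() approach described above, assuming the Azure ML Python SDK v1 (the azureml-core package) and scikit-learn are installed in the training environment. The helper names log_auc and main are hypothetical and used only for illustration; the metric name 'AUC' is assumed to match the primary metric name set in the HyperDrive configuration.

```python
def log_auc(run, auc, metric_name="AUC"):
    """Log the AUC on an Azure ML run object.

    metric_name is assumed to match the primary metric name that
    HyperDrive was configured to optimize.
    """
    run.log(metric_name, float(auc))


def main(y_test, y_predicted):
    # Imports are kept inside main() so the helper above can be used
    # (or tested) without the Azure ML SDK installed.
    from azureml.core import Run
    from sklearn.metrics import roc_auc_score

    # Run.get_context() returns the run the script is executing in.
    run = Run.get_context()

    # y_test holds the validation labels, y_predicted the predicted probabilities.
    auc = roc_auc_score(y_test, y_predicted)
    log_auc(run, auc)
```

In the training script, main(y_test, y_predicted) would be called once the model has produced its validation predictions.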

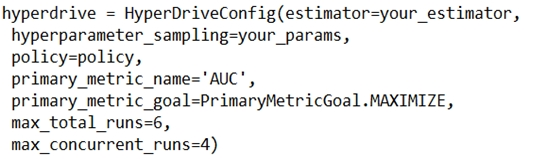

You are using Azure Machine Learning to train a classification model and have configured HyperDrive to optimize the AUC metric. You plan to run a script that trains a random forest model, where the validation data labels are in a variable named y_test and the predicted probabilities are in a variable named y_predicted.

You need to add logging to the script so that HyperDrive can optimize hyperparameters for the AUC metric.

Proposed Solution: Run the following code:

```python
from sklearn.metrics import roc_auc_score
import mlflow

auc = roc_auc_score(y_test, y_predicted)
mlflow.log('AUC', auc)
```

Does the solution meet the goal?

A. Yes

B. No
