Explanation:

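- Queries primarily use the SaleKey column for data retrieval, which makes it the natural distribution column.
- Hash distribution on SaleKey spreads rows evenly across all compute nodes, provided the column has many distinct values and no single dominant value. This prevents the data skew that creates performance bottlenecks.
- When queries filter on SaleKey in WHERE clauses, hash distribution lets each node evaluate the predicate against its own rows, without cross-node data movement.
- Because all rows with the same SaleKey land in the same distribution, queries targeting specific sale keys are processed locally on the relevant nodes.

Option A (Hash distributed table with clustered index):

The distribution is right, but a clustered (rowstore) index lacks the compression and scan performance that a clustered columnstore index gives a large fact table.

Option C (Round robin distributed table with clustered index):

Round robin assigns rows without regard to SaleKey, leading to unnecessary data movement. It also uses the less suitable clustered index.

Option D (Round robin distributed table with clustered Columnstore index):

The columnstore index is a good fit, but round robin distribution does not take advantage of the query column (SaleKey) for filtering.

Option E (Heap table with distribution replicate):

A replicated table keeps a full copy of the data on every compute node. That suits small dimension tables, not a 10 TB fact table.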

This solution follows Azure Synapse Analytics best practices: hash distribution on the frequently queried SaleKey column, combined with the analytical efficiency of a clustered columnstore index, is the best fit for this 10 TB fact table. That combination is option B.
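The difference between hash and round robin placement can be illustrated with a small simulation. This is only a sketch under stated assumptions: the SHA-256 hash is a stand-in (Synapse uses its own internal hash function), the function names and the sample data are hypothetical, and the only real figure is the 60 distributions a dedicated SQL pool uses.

```python
import hashlib

NUM_DISTRIBUTIONS = 60  # a dedicated SQL pool spreads each table over 60 distributions


def hash_distribution(sale_key: int) -> int:
    """Map a SaleKey to a distribution deterministically.

    Assumption: SHA-256 is used purely for illustration; it is not
    the hash function Synapse uses internally.
    """
    digest = hashlib.sha256(str(sale_key).encode()).digest()
    return int.from_bytes(digest[:8], "big") % NUM_DISTRIBUTIONS


def place_rows(sale_keys, strategy):
    """Return {distribution_id: [sale_key, ...]} for the chosen strategy."""
    placement = {d: [] for d in range(NUM_DISTRIBUTIONS)}
    for row_number, key in enumerate(sale_keys):
        if strategy == "hash":
            target = hash_distribution(key)  # depends only on the key
        else:
            target = row_number % NUM_DISTRIBUTIONS  # round robin ignores the key
        placement[target].append(key)
    return placement


def distributions_holding(placement, sale_key):
    """List the distributions that store at least one row for sale_key."""
    return sorted(d for d, keys in placement.items() if sale_key in keys)


# Hypothetical sample: 1,000 distinct SaleKey values with 5 rows each.
sample_keys = [key for key in range(1000) for _ in range(5)]

hash_placement = place_rows(sample_keys, "hash")
round_robin_placement = place_rows(sample_keys, "round_robin")

# Under hash distribution every row for SaleKey 42 sits in one distribution;
# under round robin the same five rows are scattered across five of them.
print("hash:", distributions_holding(hash_placement, 42))
print("round robin:", distributions_holding(round_robin_placement, 42))

# Rows per distribution, as a rough skew check for the hash strategy.
sizes = [len(keys) for keys in hash_placement.values()]
print("hash rows per distribution: min", min(sizes), "max", max(sizes))
```

In this sample a lookup on one SaleKey touches a single distribution under hash placement and five under round robin, which is the data-locality argument made above. The skew check matters in practice too: a distribution column with few distinct values or one dominant value would leave some distributions far larger than others.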


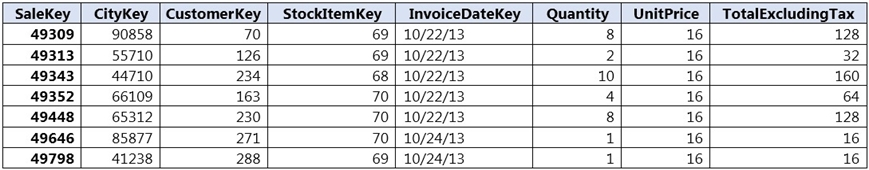

You have a large 10 TB fact table in Azure Synapse Analytics. Queries primarily use the SaleKey column to retrieve data, as shown in the provided table.

To distribute this large fact table across multiple nodes for optimal performance, which distribution technology should you use?

A. hash distributed table with clustered index

B. hash distributed table with clustered Columnstore index

C. round robin distributed table with clustered index

D. round robin distributed table with clustered Columnstore index

E. heap table with distribution replicate